Groth16 is the GOAT of zero-knowledge proof (ZKP) systems, remarkable given that it was invented in 2016 (as the name suggests), right at the start of the most impressive decade of breakthroughs in ZKPs. The downside of such a neat and compact scheme is that nobody seems to be able to understand it intuitively. Or at least, if anyone does, they haven't synthesized it for the rest of us! We're hoping to change that by bringing you an intuition-level explanation of the scheme. So read on if you finally want to crack this one open.

The Quick Skippable Introduction

First, what's Groth16 and why do people still care? The answer fits in one sentence: it's a SNARK with constant-size proofs that requires a circuit-specific trusted setup. Actually, when I said that, I also brought up its downsides... So let me explain both:

- Upside. It's a Succinct Non-interactive Argument of Knowledge. Actually, it's really succinct! So succinct that it doesn't just fit the definition of succinct which requires that proofs are at most logarithmic in size, it blows the roof off with proofs of constant size: always three group elements. If instantiated with the BN254 curve (which it is in practice), its proofs are 128 bytes, close to digital signatures in use today, and much smaller than the hundreds of KB that some other trendy proof systems can have.

- Downside. It requires a trusted setup, essentially meaning that in practice people perform a multi-party computation protocol as part of a ceremony to ensure that no one learns the toxic parts of the generated parameters. These parts we want no one to learn about would allow an attacker to forge proofs, hence why they are called toxic. Not only that, the setup is circuit-specific, so it has to be redone if a bug is detected.

But the upsides are so OP that they seem to beat the downsides for a lot of people, so much so that Groth16 is used all over the place even in protocols that use more modern and flexible proof systems. The typical usage now is to wrap the main proof system with Groth16 at the end (essentially Groth16 proves the verification program for their proof system, recursion!)

By the way, we're going to work our way to showing how the prover creates a proof $A, B, C$ and the verifier checks the equation:

$$A \cdot B = [\alpha] [\beta] + \gamma \cdot \sum_{i=0}^{l} a_i [\frac{\beta u_i(x) + \alpha v_i(x) + w_i(x)}{\gamma}] + C [\delta]$$It's a mouthful, don't look at it too much, but hopefully by the end of the post you'll understand what's happening here!

Step 1: Speaking the Language of R1CS

Before we can talk about the core of Groth16, I need to briefly mention that what Groth16 proves is R1CS constraints. I don't want to spend too much time on that, but essentially, different proof systems use different arithmetizations, which allow you to write an arithmetic circuit (made out of multiplication and addition gates) in some shape that can be proven. Some systems use Plonkish or AIR arithmetization, Groth16 uses R1CS.

We have a whole whiteboard session on all of these arithmetizations here, but in order to make this post self-contained, let's remember together what R1CS looks like.

The way I think about it is pretty simple, our R1CS circuit is written as:

-

a long memory array $a$ that is used to store the value 1 (as first entry), the public inputs and outputs, and then all the other (secret) values used in the circuit

-

a number of "R1CS" gates, let's say $n$ of them, each described by three vectors that tell us which entries from that memory array are used in the gate (and where they are used)

The "R1CS" gate essentially multiplies two linear combinations of the memory array entries, and checks that the multiplication is equal to another similar linear combination.

For example, for a single gate described by the vectors $u, v, w$, Groth16 will enforce that:

$$(\sum_i a_i u_i) (\sum_i a_i v_i) = (\sum_i a_i w_i)$$We can look at a few examples to see how that would work:

- to enforce that

a[3] + a[4] = 5you would setu, v, wto zero vectors exceptu[3] = u[4] = 1, v[0] = 1, w[0] = 5 - to enforce that

a[3] * a[4] = 4 * a[5]you would setu, v, wto zero vectors exceptu[3] = 1, v[4] = 1, w[5] = 4

The other typical way to represent R1CS, which you might have seen elsewhere, is to see all the gates as three matrices U, V, W and the constraints we enforce as $$Ua \odot Va = Wa$$ (where $\odot$ represents the Hadamard product)

But of course, the best way (and my favorite way) of seeing all of this is through four tables: U, V, W, a, where a is single-column and U, V, W all have as many columns as a has entries.

R1CS or QAP?

There's one more step. Groth16 calls its "view" of R1CS a QAP, because it needs to look at the R1CS arithmetization as a polynomial equation in order to prove it. To "polynomialify" the R1CS stuff above (and make it a QAP), what we can do is to convert every column of every table into a polynomial by pretending that every entry in a column is an evaluation at some point.

For example, we can pretend that the first column entries represent a polynomial $u_0$ such that:

- $u_0(0) = 3$

- $u_0(1) = 6$

- $u_0(2) = 11$

which we can easily (trust me) interpolate into the polynomial $u_0(x) = x^2 + 2x + 3$.

This way, we can represent the previous R1CS equation for all gates as the following equation:

$$(\sum_i a_i u_i(x)) (\sum_i a_i v_i(x)) = \sum_i a_i w_i(x)$$We only care about this equation being true for the points $0$, $1$ and $2$ in our example, so we can write it more generally as

$$(\sum_i a_i u_i(x)) (\sum_i a_i v_i(x)) = \sum_i a_i w_i(x) + t(x) q(x)$$where $t(x) = (x - 0)(x - 1)(x - 2)$ and $q(x)$ is a polynomial of degree $n-2$ (make sure you understand why)

we often call $t$ the vanishing polynomial and $q$ the quotient polynomial. Lots of ZKP schemes spend most of their time proving that such a quotient polynomial exists

The Schwartz-Zippel Trick: Checking Polynomials at a Single Point

Let's travel into the future. Eventually Groth16 will ask the prover to produce three proof elements $A$, $B$, and $C$ that will almost look like this:

- $A = \sum_i a_i u_i(x)$

- $B = \sum_i a_i v_i(x)$

-

$C = \sum_i a_i w_i(x)$

($C$ will actually look much different, but let's pretend for now)

and the verifier will eventually check something that will almost look like the following:

$$A \cdot B = C + t(x) q(x)$$This should look like the R1CS equation we saw above, let's call that the verifier's QAP check.

Importantly, like every succinct proof system we know of, the polynomial identity we're going to check will eventually be evaluated at a random $x$ instead of being checked at every $x$ (e.g. $x=0, x=1$, etc.).

Why's that? In a nutshell, the number of evaluation points $x$ we could randomly choose is very large, there are a lot of them. On the other hand, if the left-hand side and the right-hand side polynomials aren't equal, then they won't match when evaluated on most of these points.

So by choosing a random point, and checking if the polynomial identity holds when evaluated at this random point, we check that it is true everywhere with very high probability. This is what the Schwartz-Zippel lemma is about (more precisely it's about quantifying the risk of being fooled here).

Hiding the Evaluation Point in the CRS

Pretty much all proof systems use this "check the polynomial identity at a random evaluation point" trick, but while some produce the evaluation for you to check them in the clear, Groth16 checks them hidden (or "hidden in the exponent" like some people say).

Doing it hidden allows Groth16 to choose that point ahead of time, which is one of the main reasons why Groth16 is so efficient. Ahead of time means that the random $x$ will happen during the (trusted) setup, and will be encoded in the parameters that both the prover and verifier can use.

We usually call these parameters generated during the trusted setup the Common Reference String (CRS), or sometimes the Structured Reference String (SRS) to emphasize that it's not just random stuff but has algebraic structure (both terms are in current use).

Intuitively, the more information you encode in the CRS at setup, the more efficient your scheme seems to be. Groth16 encodes both that point, and also the circuit in the CRS (which we will see later). As we saw earlier this also comes with the downside of having a trusted setup, and worse, having a circuit-specific trusted setup.

To do it hidden, we use elliptic-curve commitments, that is if we have a generator $G$ that produces a subgroup, we can obtain a commitment of $s$ by producing $s \cdot G$. To simplify notation I will write $[s]$ from here on.

So at this point, let's just assume that the trusted setup has produced the evaluation of the polynomials that fully describe our circuit:

$$\text{CRS} = \{[u_i(x)], [v_i(x)], [w_i(x)]\}_i$$Then this allows the prover to produce commitments to all points

- $A = \sum_i a_i [u_i(x)] = [\sum_i a_i u_i(x)]$

- $B = \sum_i a_i [v_i(x)] = [\sum_i a_i v_i(x)]$

- $C = \sum_i a_i [w_i(x)] = [\sum_i a_i w_i(x)]$

The verifier can then check something like $A \cdot B = C + [t(x)] \cdot [q(x)]$

In Groth16, they actually publish the powers of $x$ as part of the CRS instead of $u_i(x), v_i(x), w_i(x)$, which still allows the prover to produce the same proof elements.

This scheme is not secure by itself, and at this point we haven't talked about how to produce the $[t(x)]$ and $[q(x)]$ commitments. We'll address both of these in a bit, there is something more pressing to address right now: we can't even do a multiplication of commitments.

Pairings: the Only Way to Multiply Commitments

Now that we are using commitments, we're in a bit of a pickle. The proof elements are now computed as commitments, but the verifier can't compute the check $A \cdot B = C + [t(x)] \cdot [q(x)]$ we saw above anymore, as there is a multiplication between two commitments, and we generally don't know how to do that.

Actually, that's not true, we have one way of doing it: we just need to use elliptic curve pairings!

I don't want to explain too much about pairings here so I'll just say this, if you have two elliptic curve commitments $[a]$ and $[b]$, you can obtain a new commitment $[a \times b]$ using pairings!

People write a multiplication with two commitments (using pairings) like so:

$$e([a], [b]) = [ab]$$Although, the result is in a different group (which we call the target group), so we generally write it like so:

$$e([a], [b]) = [ab]_T$$In the common instantiation with curve BN254 (not all curves support pairings), the pairing is a "type 3" pairing, so the arguments also have to be in different curves/groups generated by $G_1$ and $G_2$, so you'll usually see the above equation written like so:

$$e([a]_1, [b]_2) = [ab]_T$$But Groth16 is proven to be secure in both the symmetric and asymmetric pairing settings, and so in the rest of this post we will pretend that everything in the CRS is in the same group $G_1$:

$$e([a]_1, [b]_1) = [ab]_T$$And since I really want us to abstract pairings, just assume I'm using pairings when you see a multiplication between two commitments $[a] \cdot [b]$

The CRS as a Box of LEGOs

OK so now we have a first sketch for our scheme. The trusted setup produces the following CRS:

$$\text{CRS} = \{[u_i(x)], [v_i(x)], [w_i(x)]\}_i$$and the prover produces the three proof elements using this commitment:

- $A = \sum_i a_i [u_i(x)] = [\sum_i a_i u_i(x)]$

- $B = \sum_i a_i [v_i(x)] = [\sum_i a_i v_i(x)]$

- $C = \sum_i a_i [w_i(x)] = [\sum_i a_i w_i(x)]$

the verifier can then perform the check $A \cdot B = C + [t(x)] [q(x)]$ using pairings, and checking that the resulting commitment on the left is equal to the resulting commitment on the right.

In order to obtain everything in the target group, the commitment $C$ also has to be part of a pairing. So the verifier will have to perform $e([C], [1])$ or $e([1], [C])$ to move $C$ into the target group.

Groth16 uses the first pairing ($C$ is the left argument) because when instantiated with curves like BN254, elements of the $G_1$ group are half the size of elements of the $G_2$ group. This decision makes proofs shorter!

Now we need to talk about what we are not doing:

-

Is the prover using the same $a_i$ (witness) across all proof points?

-

Is the prover really producing $u_i$ in A (and $v_i$ in B, and $w_i$ in C?)

-

What about the public inputs? and the first value $a_0 = 1$?

Don't worry, we're going to answer them all.

Importantly at this point, we have to assume that the prover is limited in how it can construct its proof elements. While it can produce commitments to its own random values, it can't extract elements of the CRS, or freely multiply them together.

For this reason, the proof elements $A, B, C$ are always seen as linear combinations of the CRS elements (you can also imagine that $[1]$ is part of the CRS).

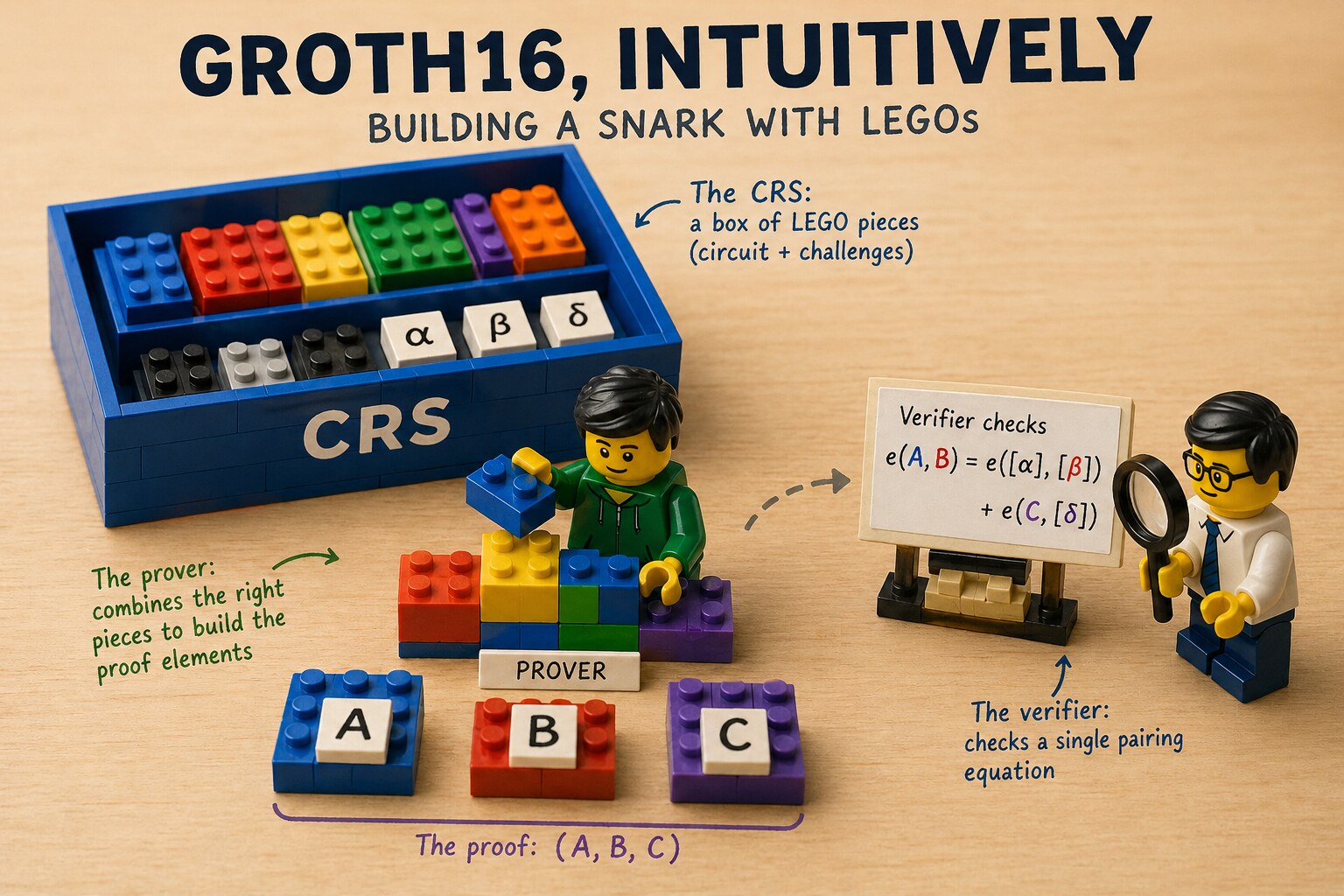

This is basically like using LEGOs! If the CRS is a box of LEGOs, the prover is limited to the pieces they've been given when creating the proof elements.

This analogy goes pretty far, as this is pretty much how Groth16 shows that the whole scheme is secure: the prover points $A, B, C$ are constructions that are based only on the LEGO pieces from the CRS, and by looking at how the verifier check behaves one can imagine what LEGO pieces must have been used in the construction of the prover points.

Delegating the Quotient Polynomial to the Prover

Let's now explain how to produce $[t(x)]$ and $[q(x)]$ in the verifier equation.

We could simply add the powers of $x$ ($[1], [x], [x^2], \cdots$) as part of the CRS, so that both the verifier can produce $[t(x)]$ by themselves, and the prover can produce $[q(x)]$.

This is almost what we'll do, but notice that since the vanishing polynomial $t(x)$ is dictated entirely by the circuit, we could precompute that during the setup and just add $[t(x)]$ in the CRS.

But Groth16 goes one step further, it publishes enough LEGO pieces for the prover to produce the entire $[t(x)q(x)]$ polynomial directly from the CRS:

$$ \text{CRS} \mathrel{+}= \{[t(x)], [t(x)x], [t(x)x^2], \cdots, [t(x)x^{n-2}]\} $$So now, we can imagine that the prover gives us an extra proof point $D = [t(x)q(x)]$, but don't get used to it as later we will move that into $C$.

Keeping the LEGOs Apart with Separator Factors

Let's look again at our verifier equation and our CRS.

$$ \text{CRS} = \{[u_i(x)], [v_i(x)], [w_i(x)]\}_i \cup \{[t(x)], [t(x)x], [t(x)x^2], \cdots, [t(x)x^{n-2}]\} $$Then we have a verifier "QAP check" that looks like that now:

$$A \cdot B = C + D$$where $D$ is computed as $[t(x)q(x)]$ as explained in the previous section.

Our LEGO analogy is sort of breaking here, because this new proof element $D$ we introduced can use anything from the CRS, and not just the pieces we wanted it to use.

The solution here is to use separating factors.

A separating factor works like this: during the trusted setup a random value is generated for the verifier to use, then its inverse is associated with the LEGO pieces we want to separate.

In our example, let's generate $[\delta]$ (a commitment to some random $\delta$ known to no one) during the trusted setup, and let's modify our CRS to multiply all the quotient polynomial LEGO pieces by $\delta^{-1}$:

$$ \text{CRS} = \{[u_i(x)], [v_i(x)], [w_i(x)]\}_i \cup \{[\delta]\} \cup \{[\frac{t(x)x^i}{\delta}]\}_i $$And finally, let's modify the verifier check to remove $\delta^{-1}$ from the proof element $D$:

$$A \cdot B = C + D \cdot [\delta]$$This works! $D$ cannot use any other terms from the CRS as LEGO pieces (because otherwise they won't get "normalized" and $D$ will be all messed up). On the other hand, $A$, $B$, and $C$ can't use LEGO pieces from $\{[\frac{t(x)x^i}{\delta}]\}_i$ without introducing this annoying $\delta^{-1}$ term.

There are other issues with $A$, $B$, and $C$ though... they don't have to use the same witness $a_i$, and can use any of the commitments of $u_i$, $v_i$, or $w_i$. Let's tackle that next.

Forcing the Prover to Use the Same Witness Everywhere

OK so remember that the three points $A, B, C$ are computed as:

- $A = \sum_i a_i [u_i(x)] = [\sum_i a_i u_i(x)]$

- $B = \sum_i a_i [v_i(x)] = [\sum_i a_i v_i(x)]$

- $C = \sum_i a_i [w_i(x)] = [\sum_i a_i w_i(x)]$

and now we want to check not only that they use the same $a_i$ (the same linear combination), but they also use the right elements $u_i, v_i, w_i$ from the CRS. We could use separator factors at this point, but Groth16 does something a bit different.

The secret to everything in cryptography was to take random linear combinations of things.

Let's try that and see what happens. Using random values $\alpha$ and $\beta$ on the three proof elements:

$$ \beta A + \alpha B + C $$we get, presumably, if the points have been produced correctly by the prover, something that should look like that:

$$ \beta (\sum_i a_i [u_i(x)]) + \alpha (\sum_i a_i [v_i(x)]) + \sum_i a_i [w_i(x)] $$Oh but notice, we can rewrite that with $a_i$ on the outside:

$$ \sum_i a_i (\beta [u_i(x)] + \alpha [v_i(x)] + [w_i(x)]) $$Doesn't this look like something we could check as the verifier?

So let's add a new witness consistency check (in addition to our previous QAP check).

But also, since we don't want the prover to create proof points that contain inverses of these challenges, the challenges have to be introduced as commitments in the CRS.

$$ [\beta] A + [\alpha] B + C = \sum_i a_i \cdot ([\beta] [u_i(x)] + [\alpha] [v_i(x)] + [w_i(x)]) $$The right-hand side has a bunch of stuff that could be pre-computed in the CRS... And if we were to introduce these new commitments in the CRS, we could get the prover to produce the right-hand side for us without revealing any of the witness values $a_i$. So we could just add these to the CRS:

$$ \text{CRS} \mathrel{+}= \{[\beta u_i(x) + \alpha v_i(x) + w_i(x)]\}_i $$and have the prover produce a new proof point (that we will later get rid of... be patient)

$$ E = \sum_i a_i [\beta u_i(x) + \alpha v_i(x) + w_i(x)] $$and of course, we have to use another separator factor $\epsilon$ to force $E$ to use only the right LEGO pieces. So we fix the last update to the CRS with:

$$ \text{CRS} \mathrel{+}= \{[\epsilon]\} \cup \{[\frac{\beta u_i(x) + \alpha v_i(x) + w_i(x)}{\epsilon}]\}_i $$Now our verifier performs two checks:

- a QAP check: $A \cdot B = C + D \cdot [\delta]$

- a witness consistency check: $[\beta] A + [\alpha] B + C = [\epsilon] E$

Not only do we have a check that the same witness $a_i$ is used across the proof elements, but we also enforce that $A$, $B$, and $C$ are of a specific shape (combinations of $u_i$, $v_i$, and $w_i$ respectively, which are the values that fully describe our circuit).

Merging Two Checks Into One

To recap, our CRS now looks like this:

$$ \text{CRS} = \{[u_i(x)], [v_i(x)], [w_i(x)]\}_i \cup \{[\delta],[\epsilon]\} \cup \{[\frac{t(x)x^i}{\delta}]\}_i \cup \{[\frac{\beta u_i(x) + \alpha v_i(x) + w_i(x)}{\epsilon}]\}_i $$Notice that in the witness consistency check, there is the $C$ from the QAP check, which we could rewrite as $C = A \cdot B - D \cdot [\delta]$

Let's substitute this into the witness consistency check, we get:

$$ [\beta] A + [\alpha] B + A \cdot B = D \cdot [\delta] + [\epsilon] E $$This is now a single check! We are now checking that $A$ and $B$ and $A \cdot B$ are of the right shape.

We can do better, by noticing that the left-hand side looks like the multiplication of $([\alpha] + A)([\beta] + B)$ except that it's missing one term ($[\alpha][\beta]$). Let's add it to our check on both sides:

$$ [\alpha][\beta] + [\beta] A + [\alpha] B + A \cdot B = [\alpha][\beta] + D \cdot [\delta] + [\epsilon] E $$and now the left-hand side can be rewritten as our simpler multiplication

$$ ([\alpha] + A)([\beta] + B) = [\alpha][\beta] + D \cdot [\delta] + [\epsilon] E $$Since both $[\alpha]$ and $[\beta]$ are part of the CRS, it is enough to just check

$$ A \cdot B = [\alpha][\beta] + D \cdot [\delta] + [\epsilon] E $$and let the prover produce $A$ and $B$ with the correct shape from the get-go:

- $A = [\alpha] + \sum_i a_i [u_i(x)]$

- $B = [\beta] + \sum_i a_i [v_i(x)]$

One last thing: notice that there is no point in separating the CRS LEGO pieces for $E$ and $D$, so we can simplify the separator factor $\epsilon = \delta$ and aggregate both $E$ and $D$ under our new $C$ proof element (we missed you $C$!).

$$ \text{CRS} = \{[\alpha], [\beta], [\delta]\} \cup \{[u_i(x)], [v_i(x)], [w_i(x)]\}_i \cup \{[\frac{t(x)x^i}{\delta}]\}_i \cup \{[\frac{\beta u_i(x) + \alpha v_i(x) + w_i(x)}{\delta}]\}_i $$and now the verifier check is

$$A \cdot B = [\alpha] [\beta] + C [\delta]$$with:

- $A = [\alpha] + \sum_i a_i [u_i(x)]$

- $B = [\beta] + \sum_i a_i [v_i(x)]$

- $C = \sum_i a_i [\frac{\beta u_i(x) + \alpha v_i(x) + w_i(x)}{\delta}] + [\frac{t(x)q(x)}{\delta}]$

Pinning Down the Public Inputs

This whole time we kinda ignored the public inputs, but these are important! The verifier not only wants to make sure that $a_0 = 1$ as we talked about previously (to make sure we can add constants in our circuit), but they also want to fix the $a_i$ that are part of the public input.

Groth16 does this by fixing the first $l+1$ of them (if we have $l$ public inputs) in the verifier check:

$$A \cdot B = [\alpha] [\beta] + \sum_{i=0}^{l} a_i [\beta u_i(x) + \alpha v_i(x) + w_i(x)] + C [\delta]$$But now notice that the prover can still mess with the public inputs by including terms $[\beta u_i(x) + \alpha v_i(x) + w_i(x)]$ for $i \leq l$ that are still available in the CRS!

No panic, we can still use our separator factors in order to separate these terms. Our CRS had this before:

$$ \{[\frac{\beta u_i(x) + \alpha v_i(x) + w_i(x)}{\delta}]\}_i $$we replace this with

$$ \{[\frac{\beta u_i(x) + \alpha v_i(x) + w_i(x)}{\delta}]\}_{i > l} \cup \{[\frac{\beta u_i(x) + \alpha v_i(x) + w_i(x)}{\gamma}]\}_{i \leq l} \cup [\gamma] $$and change the verifier equation to

$$A \cdot B = [\alpha] [\beta] + \gamma \cdot \sum_{i=0}^{l} a_i [\frac{\beta u_i(x) + \alpha v_i(x) + w_i(x)}{\gamma}] + C [\delta]$$Voila! This is the equation we had at the very beginning, hopefully you get how it works now :)

What About the Zero-Knowledge Part?

Oh yeah, I kinda skipped this part. It is fairly easy to show how to add zero-knowledgeness to the scheme, by having the prover generate random $r$ and $s$ and producing the proof elements as:

- $A = [\alpha] + \sum_i a_i [u_i(x)] + r [\delta]$

- $B = [\beta] + \sum_i a_i [v_i(x)] + s [\delta]$

- $C = \sum_i a_i [\frac{\beta u_i(x) + \alpha v_i(x) + w_i(x)}{\delta}] + [\frac{t(x)q(x)}{\delta}] + A s + B r - rs [\delta]$

This effectively randomizes $A$ and $B$ in an unpredictable way (making them look totally random), and cancels the changes in $C$ (which is uniquely determined by $A$ and $B$).

Why that's good enough is left for you to think about.